Midjourney AI vs DALL-E 4 for Architecture: Which Creates Better Renders in 2026?

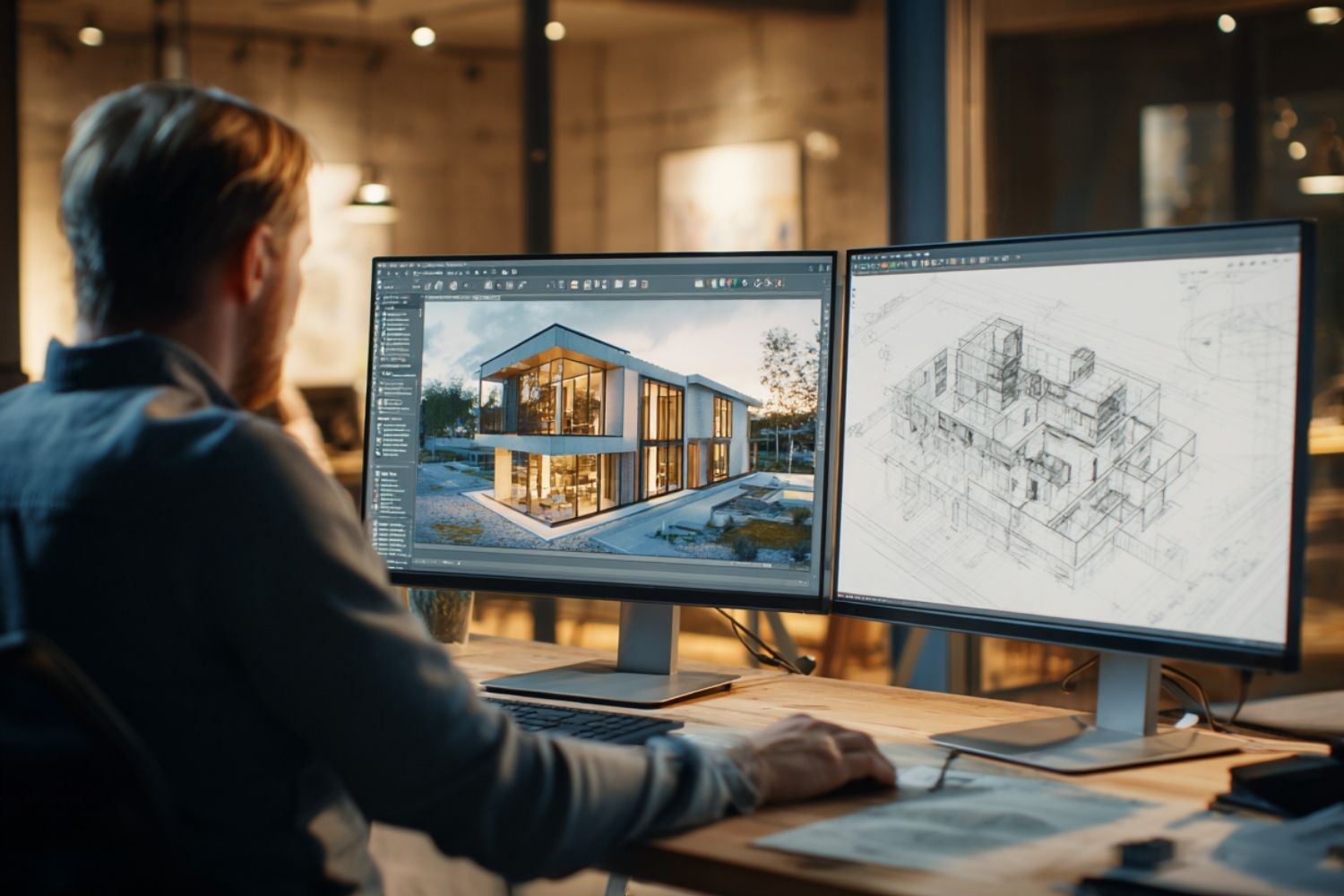

Midjourney and DALL-E 4 both generate impressive architectural visuals, but they serve different purposes in a design workflow. This comparison breaks down rendering quality, prompt control, ease of use, and real-world performance to help architects choose the right tool for their projects in 2026.

Table of Contents

- What Is Midjourney and How Do Architects Use It?

- What Is DALL-E 4 and How Does It Differ for Architecture?

- Head-to-Head Comparison: Midjourney vs DALL-E 4 for Architectural Rendering

- Which Tool Wins for Concept Design?

- Rendering Quality: Where Midjourney Still Leads

- Practical Workflow: How Architects Use Both Tools Together

- Limitations Neither Tool Has Solved

- Which AI Renderer Should Architects Choose in 2026?

Midjourney AI and DALL-E 4 are the two most widely used text-to-image generators among architects in 2026, each producing strikingly different results from the same prompt. Midjourney excels at atmospheric, mood-driven visuals with exceptional lighting and material quality, while DALL-E 4 delivers precise, prompt-accurate renders that integrate seamlessly with ChatGPT workflows. The right choice depends entirely on your project stage and presentation goals.

What Is Midjourney and How Do Architects Use It?

Midjourney is an independent AI image generator accessed through Discord, built around a model trained to prioritize visual quality, artistic coherence, and atmospheric richness. For architects, it functions as a powerful concept exploration tool. Feed it a descriptive prompt about material palette, lighting conditions, and spatial mood, and it produces visuals that often look closer to high-end architectural photography than a standard render.

Architects at firms including Zaha Hadid Architects have used Midjourney to generate early-stage design references and explore aesthetic directions before any 3D modeling begins. The platform lets designers test material combinations, massing ideas, and atmosphere without touching Revit or Rhino. That speed is its defining advantage at the conceptual stage.

The Midjourney image generator works through a Discord bot interface where users type prompts and receive four image variations. From there, you can upscale, vary, or remix outputs. The v7 model released in April 2025 significantly improved spatial coherence, hand accuracy, and material realism in architectural imagery.

💡 Pro Tip

When prompting Midjourney for architectural renders, front-load the camera position and lighting conditions before describing the building itself. A prompt structured as “wide shot, late afternoon sun, south facade, concrete and glass residential building with deep overhangs” consistently outperforms vague descriptions. The platform responds strongly to environmental context, not just object descriptions.
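A team can build prompts in this order consistently by wrapping the pattern in a small helper. The Python below is a minimal sketch that assumes prompts are assembled as plain comma-separated text. The function name `build_arch_prompt` is hypothetical. The trailing `--ar` flag is Midjourney's aspect-ratio parameter, and the 16:9 default is only an illustrative choice.

```python
def build_arch_prompt(camera: str, lighting: str, view: str, building: str,
                      aspect_ratio: str = "16:9") -> str:
    """Assemble a Midjourney-style prompt with camera and lighting first.

    Hypothetical helper: environmental context leads, the building follows.
    """
    # Order matters: camera position, then lighting, then viewpoint, then the building.
    parts = [camera, lighting, view, building]
    # Drop empty fields and stray whitespace so the prompt stays clean.
    body = ", ".join(p.strip() for p in parts if p and p.strip())
    # --ar sets the output aspect ratio in Midjourney.
    return f"{body} --ar {aspect_ratio}"


if __name__ == "__main__":
    print(build_arch_prompt(
        camera="wide shot",
        lighting="late afternoon sun",
        view="south facade",
        building="concrete and glass residential building with deep overhangs",
    ))
```

This prints the example prompt from the tip with `--ar 16:9` appended. Fixing the order in code, rather than relying on each designer's habits, makes it easier to compare outputs across a team.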

What Is DALL-E 4 and How Does It Differ for Architecture?

DALL-E 4 is OpenAI’s latest DALL-E model, integrated directly into ChatGPT and accessible through the API. Unlike Midjourney, it approaches image generation with a stronger emphasis on literal prompt adherence. When you specify oak cladding, a flat roof, and morning light, DALL-E 4 attempts to deliver exactly those elements rather than interpreting the scene through an artistic filter.

This makes DALL-E 4 particularly useful for client presentations where specific design decisions need to be communicated accurately. If a client asks to see their building with aluminum louvers on the west facade, DALL-E 4 is more likely to render that specific feature than Midjourney, which tends to interpret prompts creatively rather than literally.

DALL-E 4 also has a substantial advantage in text rendering within images. Signage, wayfinding, labels on drawings, and any typographic element in a scene are handled with far greater accuracy. Midjourney still struggles with legible text, often producing garbled characters that require editing in post-production.

🎓 Expert Insight

“Using Midjourney, the fluid nature of the AI along with a large number of Zaha Hadid images in the training data makes it particularly well-suited for projects that reference that visual language. Prompt engineering goes far beyond typing a sentence or two — it requires understanding of how the model interprets weight, modifiers, and iterative variation.” — Chhavi Mehta, Architectural Assistant, Zaha Hadid Architects

This points to a critical truth for any architect adopting AI tools: the model’s training data shapes what it does well. A tool trained heavily on a specific architectural style will perform differently from one trained on diverse global examples, and architects should factor this into their tool selection.

Head-to-Head Comparison: Midjourney vs DALL-E 4 for Architectural Rendering

Comparing these two platforms across the criteria architects actually care about reveals a clear pattern: neither tool is universally superior. Each dominates in specific contexts. The following comparison table breaks down where each performs best.

Midjourney vs DALL-E 4: Feature Comparison for Architects

The table below summarizes how each platform performs across the key criteria relevant to architectural visualization:

| Criteria | Midjourney v7 | DALL-E 4 |
|---|---|---|
| Visual atmosphere | Exceptional: cinematic, mood-driven | Good: clean and accurate |
| Prompt adherence | Interpretive: prioritizes aesthetics | High: follows specifications closely |
| Lighting and materials | Outstanding: understands material texture and light | Competent: accurate but less nuanced |
| Text rendering in image | Poor: often garbled | Excellent: clear and accurate |
| Ease of access | Discord interface, with a learning curve | ChatGPT integration, intuitive |
| API availability | No public API (as of 2026) | Full API access via OpenAI |
| Best project stage | Concept ideation, competition mood boards | Design development, client briefings |
| Starting price | $10/month (Basic plan) | Included with ChatGPT Plus ($20/month) |

Which Tool Wins for Concept Design?

For early-stage conceptual work, Midjourney has no real peer in the AI image generator market. Its ability to produce images that feel resolved and atmospheric from minimal input makes it an ideal sketchbook replacement. Architects working on competition entries or pitch visuals consistently reach for Midjourney when the goal is to evoke a feeling rather than specify a detail.

Andrew Kudless, architect and professor at the University of Houston, captured this well when speaking to Fast Company: the advantage of Midjourney lies precisely at the beginning of a project, when you are dreaming about what something could be. That conceptual freedom is not a bug; it is the whole point.

DALL-E 4, by contrast, performs better once design decisions have been made and the goal is to visualize them accurately. If you have chosen a specific cladding system, defined the massing, and want to communicate a particular design intent to a client, DALL-E 4’s literal prompt interpretation is an asset rather than a limitation.

📌 Did You Know?

A 2025 peer-reviewed study published in Buildings (MDPI) compared DALL-E, Midjourney, and Stable Diffusion for generating architectural imagery of mindful architecture. Using natural language processing analysis on the generated outputs, researchers found that each model encoded a distinct “vocabulary” of spatial and material characteristics — meaning your choice of AI tool shapes not just how the building looks, but what architectural ideas it subtly communicates.

Rendering Quality: Where Midjourney Still Leads

In terms of raw visual impact, Midjourney v7 consistently produces images that architects describe as closer to high-end architectural photography than standard renders. The model’s training gives it a nuanced understanding of how light behaves on different materials — the soft scatter across raw concrete, the sharpness of reflections on glass, the warmth absorbed by timber. These are qualities that take significant effort to achieve in traditional render engines like V-Ray or Lumion.

DALL-E 4 produces cleaner, more controlled outputs, but they tend to read as precise rather than evocative. For a competition board where a single hero image needs to stop the jury mid-flip, Midjourney’s visual richness carries more weight. For a technical presentation where the client needs to understand the proposed fenestration pattern, DALL-E 4’s accuracy serves the purpose better.

This distinction is explored further in illustrarch’s comprehensive guide to the 25 best AI architectural rendering tools in 2026, which positions each platform within a broader ecosystem of specialized tools.

🔢 Quick Numbers

- Midjourney v7’s May 2025 update improved hand and geometry coherence by approximately 30% compared to v6 (Midjourney release notes, 2025)

- DALL-E 4 outputs reach resolutions up to 1536 x 1536 px, compared to Midjourney’s base outputs around 1024 x 1024 px (OpenAI API documentation, 2025)

- Over 50 million creators globally use Midjourney or DALL-E regularly, across fields ranging from architecture to marketing (Vertu, 2025)
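If the resolution figures above are taken at face value, the gap in pixel count is larger than the side lengths suggest, because image area grows with the square of the edge length:

```latex
\frac{1536 \times 1536}{1024 \times 1024}
  = \left(\frac{1536}{1024}\right)^{2}
  = 1.5^{2}
  = 2.25
```

By this measure, a 1536 x 1536 px output holds about 2.25 times as many pixels as a 1024 x 1024 px base output, roughly 2.36 million compared with 1.05 million.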

Practical Workflow: How Architects Use Both Tools Together

Many experienced architecture studios no longer treat Midjourney and DALL-E as competitors but as tools for different phases. A typical workflow starts with Midjourney for concept mood boards, generating multiple directions quickly to identify the design language the team wants to pursue. Once a direction is confirmed, DALL-E 4 handles the more specification-driven visualizations that go to clients or consultants.

From there, tools like Rendair or Veras take over once a 3D model exists, using ControlNet-style geometry inputs that anchor the render to actual building proportions. This three-stage approach — Midjourney for mood, DALL-E for specification, BIM-integrated tools for accuracy — reflects how professional practices are structuring their AI workflows in 2026.

For architects who want to go deeper on prompting strategies for Midjourney, the Mastering Midjourney Architecture handbook published by illustrarch covers theme words, architectural style modifiers, rendering style references, and lighting prompts in detail. It is freely available as a PDF resource.

💡 Pro Tip

If you are using DALL-E 4 inside ChatGPT, take advantage of conversational iteration. After generating an initial image, you can ask “keep the same massing but replace the facade material with weathered corten steel and reduce the window-to-wall ratio” and the model will carry context from the previous output. This conversational refinement is not possible in Midjourney’s Discord interface, where each prompt starts fresh unless you use seed referencing.

Limitations Neither Tool Has Solved

Both platforms share common limitations that architects should understand before committing to either as their primary visualization tool. Neither produces outputs that are accurate enough to replace geometry-anchored rendering for technical presentations, construction documentation, or permit submissions. A Midjourney image looks stunning but has no structural logic — stairs may float, proportions may drift, and structural elements may be physically impossible. DALL-E 4 is more disciplined, but still generates imagery, not architecture.

Privacy is also an active concern. Midjourney images are public by default unless you subscribe to a Pro or Mega plan with Stealth Mode enabled. For early-stage designs on sensitive projects, generating visuals through a platform where all outputs are visible to the community is a significant confidentiality risk. DALL-E 4 through ChatGPT defaults to private outputs, which makes it safer for professional use in that regard.

Copyright and ownership questions also remain unresolved for both platforms. The industry is still working through questions about training data, commercial rights, and what it means to generate an image using a model trained on existing architectural photography.

⚠️ Common Mistake to Avoid

Many architects present Midjourney renders to clients as if they represent actual design proposals. They do not — they represent a mood and an aesthetic direction. Treating AI-generated images as stand-ins for technical renders creates client expectations that the actual building may not match. Always label AI-generated imagery clearly as conceptual, and transition to geometry-based tools as soon as design decisions solidify.

Which AI Renderer Should Architects Choose in 2026?

The decision comes down to what you need the image to do. If the goal is to capture a design idea, inspire a client, win a competition, or explore an aesthetic direction quickly, Midjourney is the stronger tool. Its visual quality at the conceptual stage remains unmatched among general-purpose AI image generators.

If the goal is to communicate a specific design decision accurately, embed text, integrate with a ChatGPT workflow, or generate images programmatically through an API, DALL-E 4 is the more practical choice. Its prompt adherence and accessibility lower the barrier for teams less experienced with AI prompting.
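The decision rule in the two paragraphs above can be written as a simple lookup. The Python below is a hypothetical sketch, not a scoring method from any study. The goal labels paraphrase this section. The function `suggest_tool` counts which tool's strengths a brief matches, and it returns "both" on a tie, which follows the toolkit approach this article recommends.

```python
from typing import Iterable

# Hypothetical goal labels, paraphrased from the article's decision criteria.
MIDJOURNEY_GOALS = {
    "capture design idea",
    "inspire client",
    "win competition",
    "explore aesthetic",
}
DALLE_GOALS = {
    "communicate specific decision",
    "embed text",
    "chatgpt workflow",
    "api generation",
}


def suggest_tool(goals: Iterable[str]) -> str:
    """Return the tool whose strengths match more of the stated goals."""
    goal_set = set(goals)
    mj_score = len(goal_set & MIDJOURNEY_GOALS)
    dalle_score = len(goal_set & DALLE_GOALS)
    if mj_score > dalle_score:
        return "Midjourney"
    if dalle_score > mj_score:
        return "DALL-E 4"
    # Ties, including briefs with no recognized goals, point to using both tools.
    return "both"


if __name__ == "__main__":
    print(suggest_tool({"win competition", "explore aesthetic"}))  # Midjourney
    print(suggest_tool({"embed text", "api generation"}))          # DALL-E 4
    print(suggest_tool({"inspire client", "embed text"}))          # both
```

A real brief carries far more nuance than a set of labels. The sketch only shows that the choice follows from what the image needs to do, not from which tool is "better" in general.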

Architects who are serious about AI-assisted visualization should treat both tools as part of a broader toolkit rather than choosing one permanently. The architecture and design industry is also producing specialized tools — AI rendering platforms built specifically for architectural workflows — that address limitations both Midjourney and DALL-E share when precision matters more than mood.

For a deeper look at how Midjourney has evolved as a design tool and how AI is reshaping the early stages of architectural practice, the illustrarch guide to Midjourney’s video update and AI landscape design covers recent developments worth following. Additional context on how AI tools fit into the broader visualization ecosystem is available through ArchDaily’s analysis of AI and creative tasks in architecture and the RIBA’s guidance on Midjourney for architects.

For technical reference on DALL-E 4 capabilities and API access, the OpenAI image generation documentation is the authoritative source. For Midjourney, the official Midjourney documentation covers prompt parameters, model versions, and subscription options in full detail.

✅ Key Takeaways

- Midjourney AI produces the most visually compelling architectural renders for conceptual and competition work, with superior lighting, material texture, and atmospheric quality.

- DALL-E 4 excels at prompt adherence, text rendering, and ChatGPT integration — making it more reliable when specific design elements need to be communicated accurately.

- Neither tool replaces geometry-based rendering for technical presentations. Both generate images, not architecturally accurate models.

- The most effective professional workflow uses both tools at different design stages, with Midjourney for concept and DALL-E 4 for design development communication.

- Privacy, copyright, and accuracy limitations apply to both platforms and should be clearly communicated when sharing AI-generated images with clients or consultants.

Mechanical engineer engaged in construction and architecture, based in Istanbul.
